Screens that disappear.

One job: stay out of the way. Every design decision was stress-tested against a single question: does this make the user think about the technology, or about how they feel? Biometrics are visible but secondary. Audio controls are primary and always accessible.

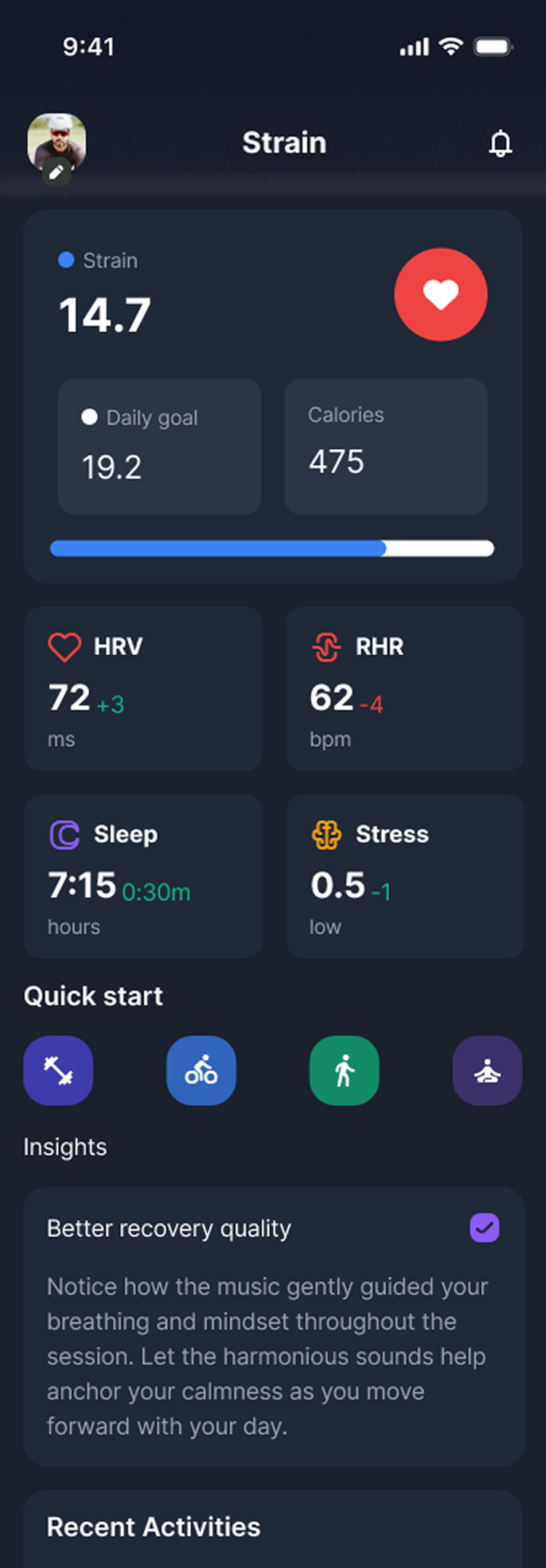

Strain · Activity Dashboard

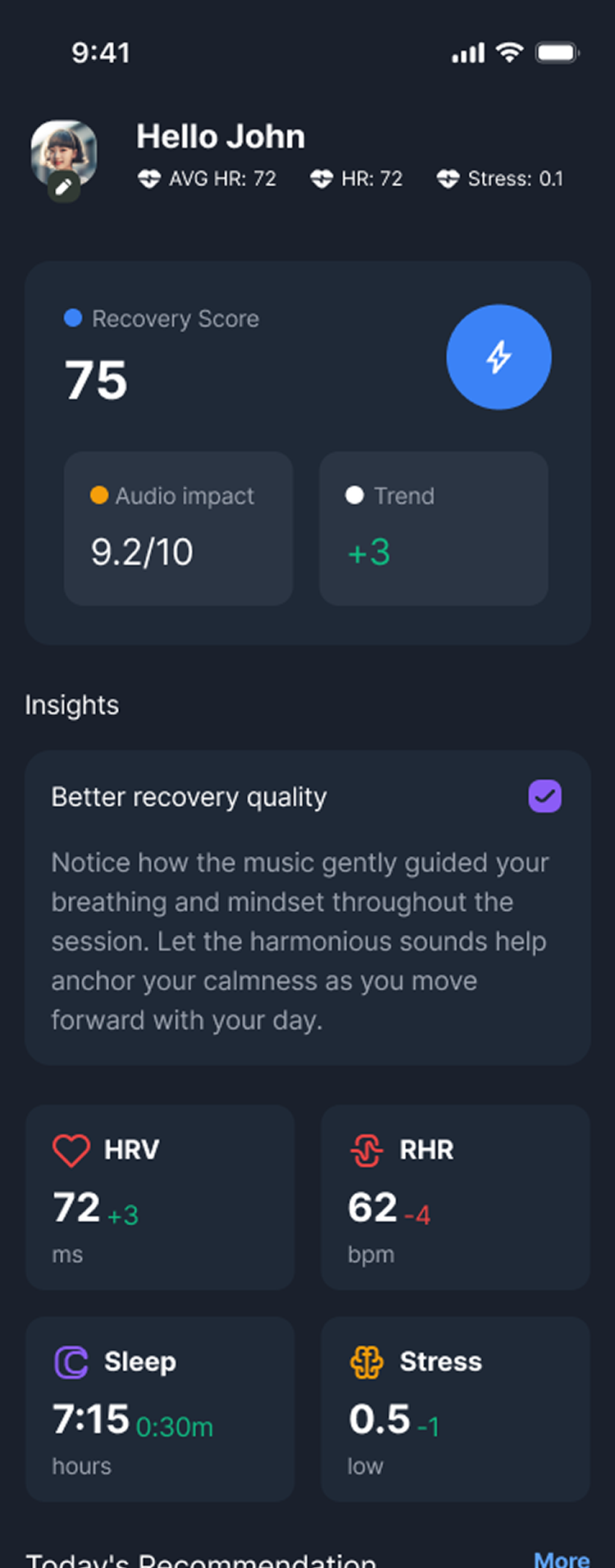

Recovery · HRV Dashboard

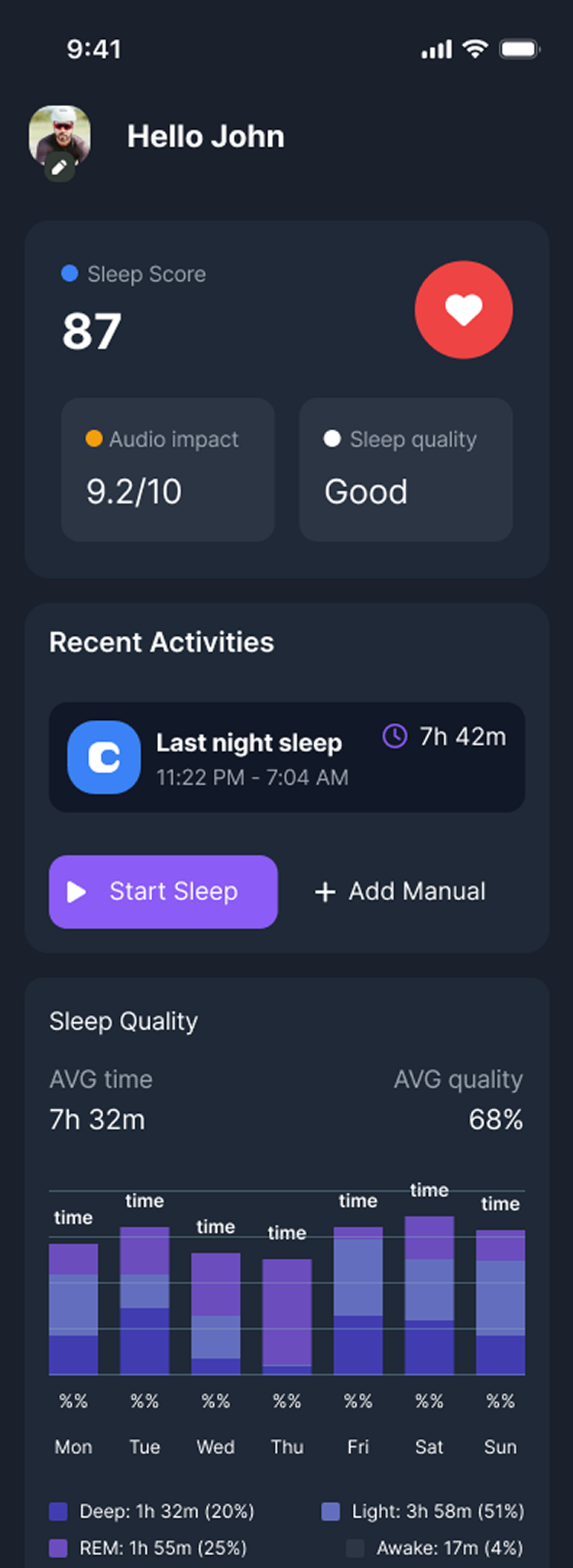

Sleep · Passive Dashboard

Focus · Binaural Mode

Complete screen set across all four modes. Consistent spatial hierarchy throughout: biometrics secondary, audio controls always primary and accessible.